Inference Speed: The Invisible Constraint Defining the Success of Real-Time AI

At CES 2026, a live spatial intelligence demo revealed a critical constraint shaping real-time AI: inference speed. As AI moves beyond language and into physical space, infrastructure—not models or narratives—will determine what systems can actually do at scale.

AI Is Moving Beyond Language—and Hacking the Grammar of Space

A turning point for spatial intelligence, observed at CES 2026

The exchange between Lisa Su, CEO of AMD, and Fei-Fei Li, CEO of World Labs, on the CES 2026 keynote stage marked more than a product demonstration. It signaled a structural transition in artificial intelligence.

If the past two years were defined by an obsession with large language models—systems designed to generate and reason over text—CES 2026 made clear that AI is now moving into a different phase: one in which machines infer, preserve, and operate within the physical structure of the world itself. This shift is increasingly described as spatial intelligence.

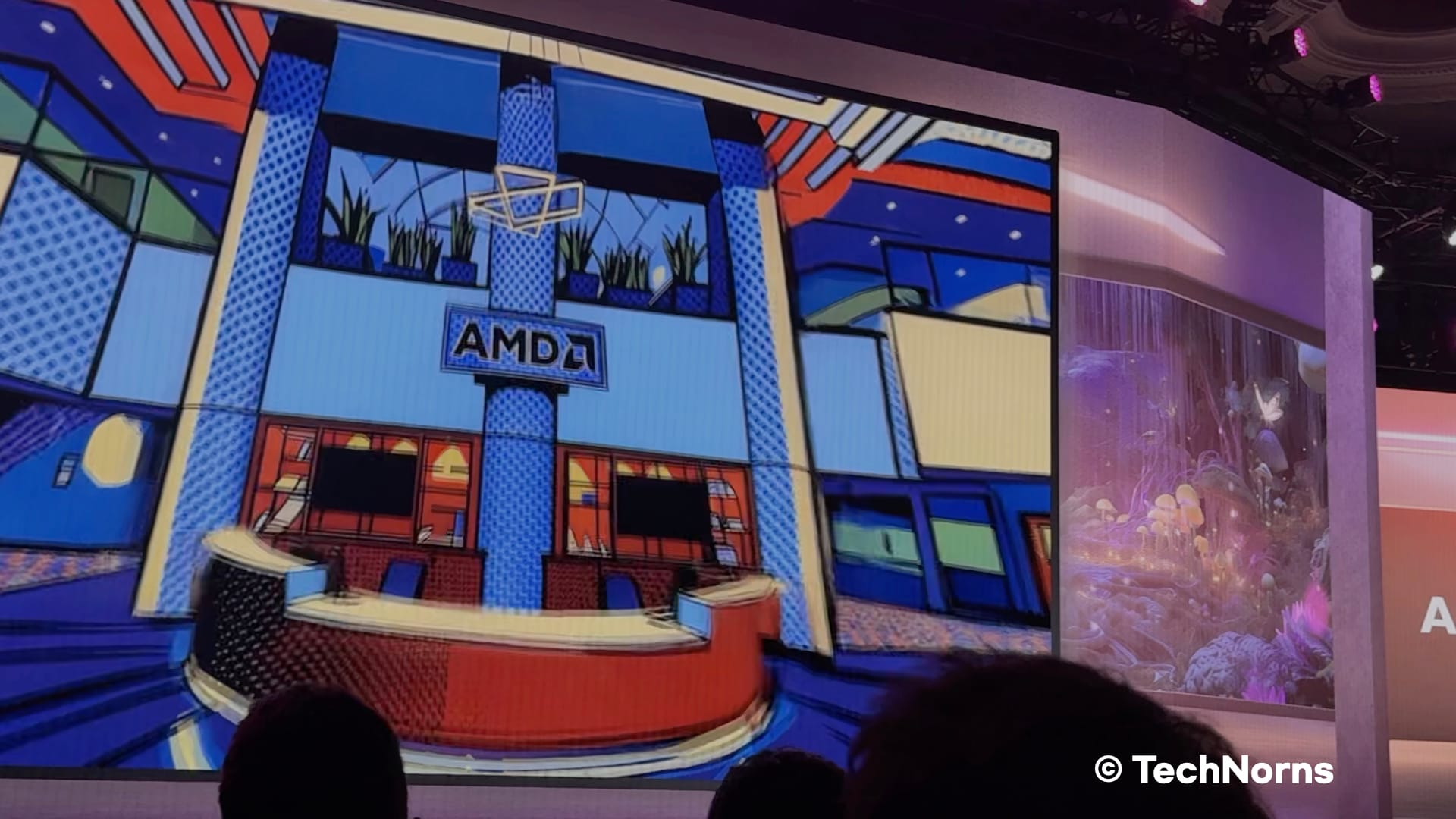

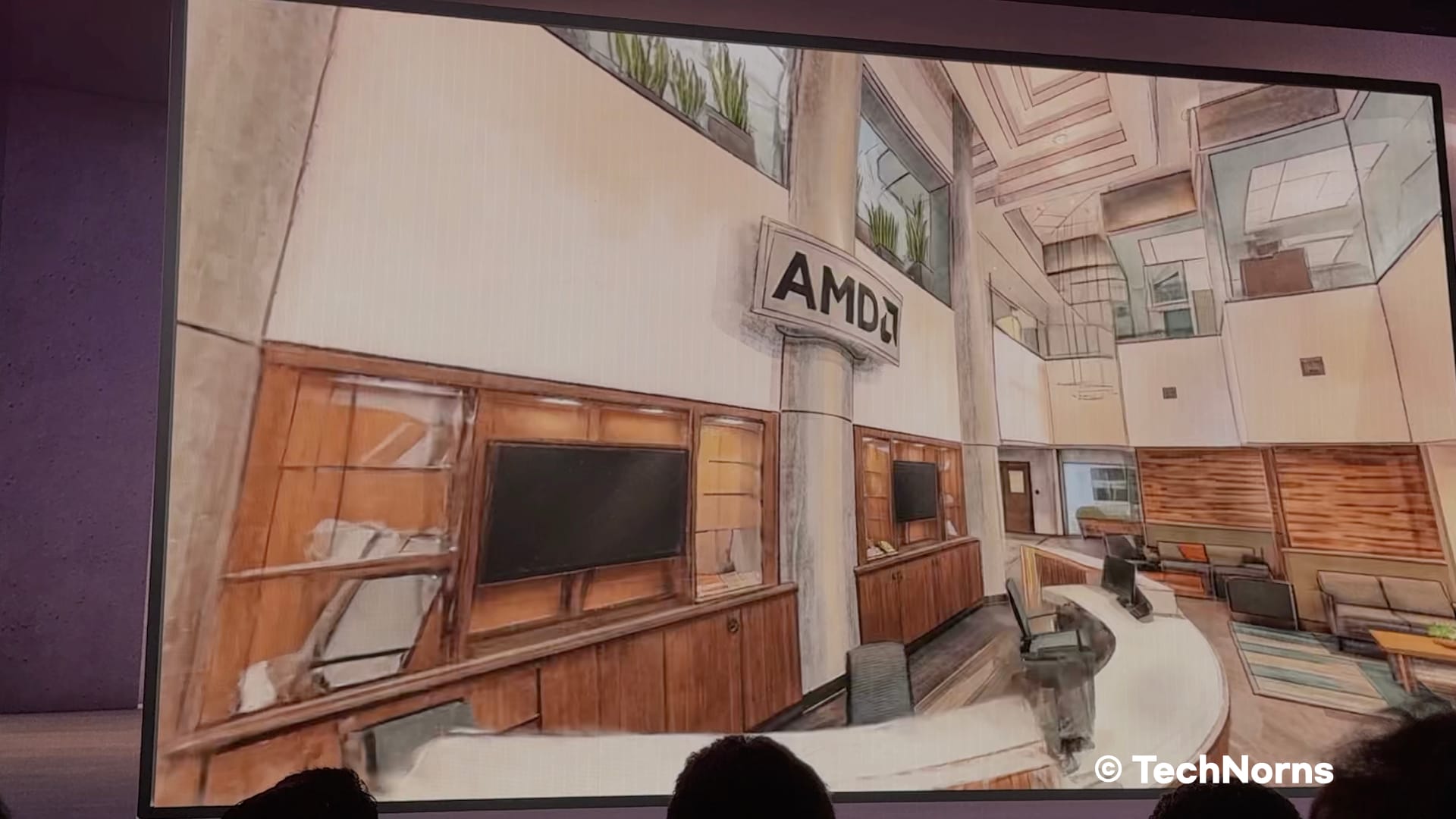

Using only smartphone photos of AMD’s Silicon Valley office, World Labs’ system reconstructed the space as a navigable 3D environment within minutes. The room’s layout remained intact while its visual style shifted freely—from Egyptian motifs to fantasy-inspired designs. The point was not aesthetics. It was evidence that AI systems are beginning to reason about space as a coherent, rule-governed environment.

From Image Generation to Spatial Reasoning

The core technical achievement on display was geometric consistency. Unlike conventional generative models that produce visually plausible images without internal structure, World Labs’ model inferred the spatial relationships between windows, doors, furniture, depth, and scale—and preserved those relationships across transformations.

Changing the style of a room without collapsing its underlying geometry requires the model to internalize spatial constraints rather than merely mimic surface appearance. In practical terms, this means the AI is no longer just “seeing” an image. It is constructing a world model that remains stable as users move through it.

This is a meaningful departure from text-first interfaces. The system is no longer limited to describing environments—it can generate spaces that remain coherent under interaction.

The Collapse of Creation Costs

Historically, producing this level of 3D modeling required specialized teams and months of work. At CES, that process was reduced to minutes.

This compression has consequences beyond creative industries. When spatial modeling becomes accessible through consumer-grade hardware, the bottleneck shifts away from technical execution and toward human intent. Design, simulation, and experimentation move faster not because tools are marginally better, but because the cost of iteration collapses.

Games, architecture, interior design, and digital content creation are obvious beneficiaries. But the deeper impact lies elsewhere.

Why Spatial Intelligence Matters to the Physical Economy

The most immediate applications are not virtual worlds but robotics and autonomous systems.

Robots and self-driving vehicles depend on exposure to rare and dangerous edge cases—scenarios that are difficult, expensive, or unsafe to reproduce in the real world. Spatial intelligence allows engineers to generate high-fidelity digital twins of real environments from limited visual data, then systematically vary conditions: weather, lighting, obstacles, or layout.

Training that once required extensive real-world testing can now be conducted at scale in simulation. Optimization increasingly happens before hardware is deployed, not after failure occurs.
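
The systematic variation described above can be sketched as a simple scenario grid. This is a minimal illustration, not World Labs' or AMD's pipeline: the `Scenario` class, the `scenario_grid` helper, and every condition value are hypothetical names chosen for the example.

```python
# Sketch: enumerate simulation scenarios by varying conditions over one
# reconstructed environment. All names and values here are hypothetical.
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Scenario:
    weather: str
    lighting: str
    obstacle: str
    layout: str


def scenario_grid(weather, lighting, obstacles, layouts):
    """Return every combination of the given conditions as Scenario objects."""
    return [Scenario(*combo) for combo in product(weather, lighting, obstacles, layouts)]


if __name__ == "__main__":
    grid = scenario_grid(
        weather=["clear", "rain", "fog"],
        lighting=["day", "dusk", "night"],
        obstacles=["none", "pedestrian", "stalled_vehicle"],
        layouts=["original", "rearranged"],
    )
    print(len(grid), "scenarios; first:", grid[0])
```

Even this small grid multiplies quickly, which is the point: one reconstructed environment can yield dozens of test conditions without a single additional real-world run.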

In this context, spatial intelligence is not a visualization tool. It is a productivity multiplier for physical systems.

The Invisible Constraint: Inference Speed

During the demonstration, Fei-Fei Li emphasized a point that is easy to overlook: inference speed determines realism.

For a spatial model to remain coherent while a user navigates and edits it in real time, massive amounts of computation must complete within each frame's latency budget. Any perceptible delay breaks the illusion and, more importantly, breaks usability.

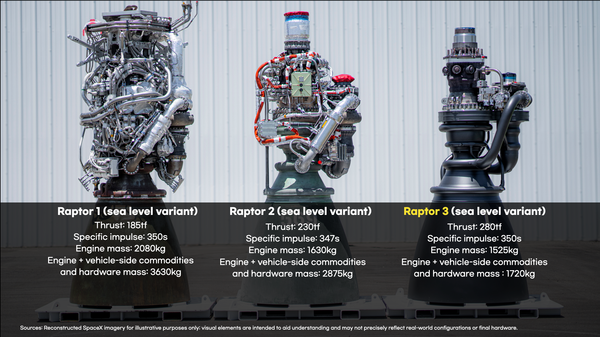

This is where infrastructure matters. World Labs’ Marble model was shown running on AMD’s Instinct MI325X platform using the ROCm software stack. With 256 GB of HBM3E memory and bandwidth approaching 6 TB/s, the system is designed to handle the data movement and parallelism required by spatial workloads.
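
A rough back-of-the-envelope calculation suggests why memory bandwidth matters for real-time use. The sketch below takes the capacity and bandwidth figures quoted above; the frame rates, the function names, and the simplification that a frame is purely bandwidth-bound are illustrative assumptions, not details from the demonstration.

```python
# Back-of-the-envelope: how much data can be streamed from memory per frame?
# Bandwidth and capacity are the figures quoted above; the frame rates and the
# purely bandwidth-bound model are illustrative assumptions.

HBM_BANDWIDTH_BYTES_PER_S = 6e12   # ~6 TB/s, treated as an upper bound
HBM_CAPACITY_BYTES = 256e9         # 256 GB


def frame_budget_ms(fps: float) -> float:
    """Time available per frame, in milliseconds."""
    return 1000.0 / fps


def max_bytes_per_frame(fps: float, bandwidth: float = HBM_BANDWIDTH_BYTES_PER_S) -> float:
    """Upper bound on bytes readable from memory within one frame."""
    return bandwidth / fps


if __name__ == "__main__":
    for fps in (30, 60, 90):
        gb = max_bytes_per_frame(fps) / 1e9
        share = max_bytes_per_frame(fps) / HBM_CAPACITY_BYTES
        print(f"{fps} fps: {frame_budget_ms(fps):.1f} ms budget, "
              f"<= {gb:.0f} GB read per frame ({share:.0%} of capacity)")
```

Under these assumptions, a 60 fps target leaves about 16.7 ms per frame, in which at most roughly 100 GB could be read from memory. Real workloads will see less, since compute, synchronization, and rendering share the same budget.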

Hardware, in this framing, is not an optimization detail. It is the limiting factor.

The demonstration was compelling. The harder question is whether AMD can make this workflow routine rather than exceptional, and that will depend on execution over time.

Infrastructure, Not Declarations, Defines the Future

Earlier at CES, Greg Brockman argued that future GDP growth will be driven by access to compute. Fei-Fei Li’s demonstration showed what that compute enables: systems that model, simulate, and operate within physical reality.

AI is no longer confined to answering questions. It is becoming a system that constructs and manages environments—digital representations that increasingly guide real-world action.

For leaders, the implication is straightforward. The next phase of AI advantage will not be determined by who articulates the most compelling vision, but by who builds and operates the infrastructure capable of running these models at scale.

The future does not arrive through declarations. It functions only on top of prepared infrastructure.