Why Did Jensen Huang Go to SpaceX?

NVIDIA’s DGX Spark visit to SpaceX signals a deeper convergence between AI infrastructure and launch-scale industrial systems, linking computational density with orbital ambition.

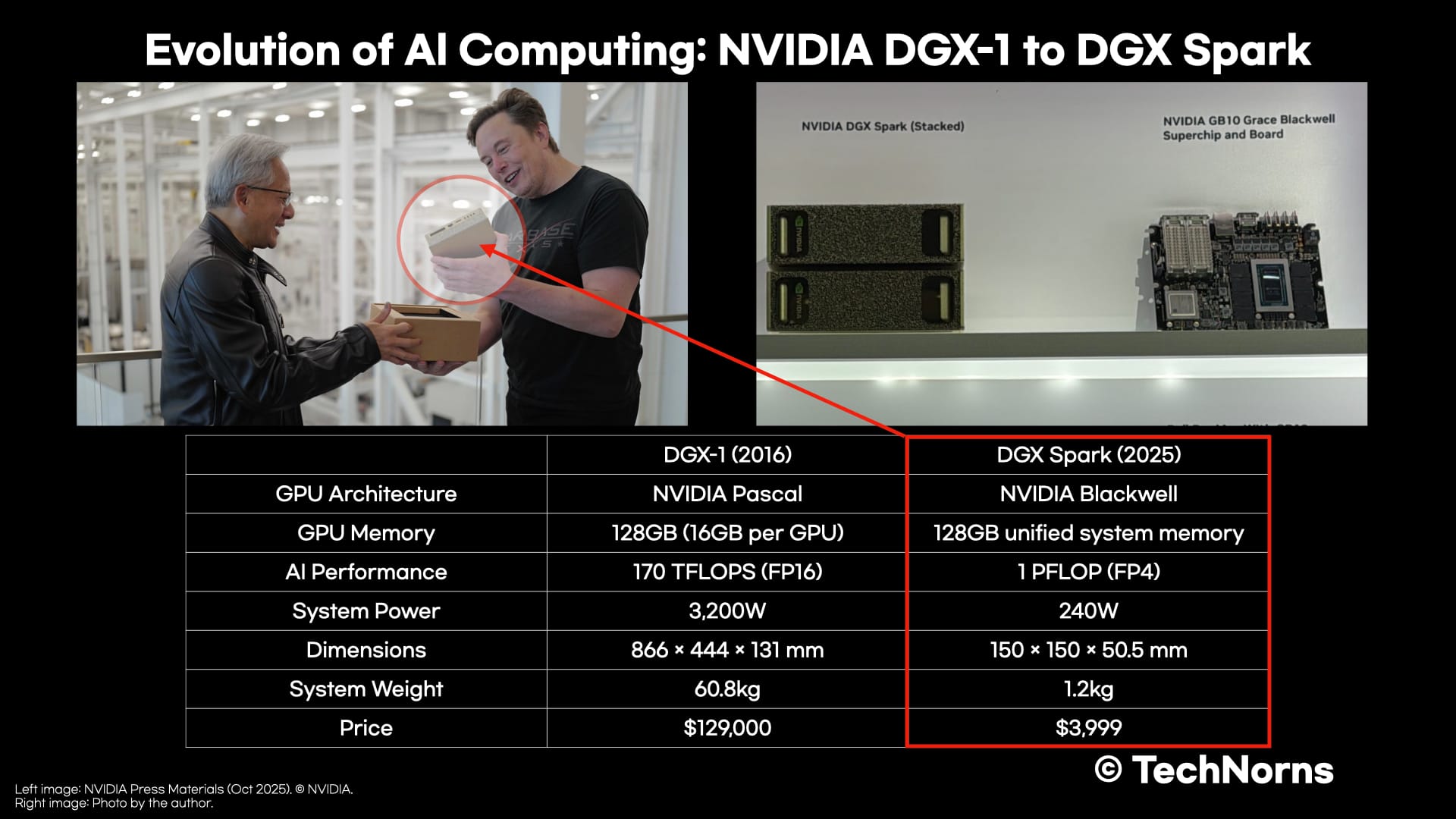

In October 2025, Nvidia CEO Jensen Huang traveled to Starbase to meet Elon Musk. He did not come for a courtesy visit. He personally delivered DGX Spark, which he described as “the world’s smallest AI supercomputer.”

The message was not about size. It was about control over the physical foundations of artificial intelligence.

DGX Spark delivers petaflop-class AI performance at ultra-low precision, integrates 128GB of unified system memory, and is capable of running inference on models approaching 200 billion parameters. It is not a symbolic miniature. It is a condensed data center.

But during the visit, Huang did something more deliberate. He reminded Musk that Nvidia had supplied the original DGX-1 system to OpenAI in 2016.

That reference was strategic.

Nvidia Was There Before the Boom

DGX-1 marked the beginning of vertically integrated, AI-optimized supercomputing systems. It combined Pascal GPUs, high-speed interconnect, and a tightly coupled software stack into a single machine built explicitly for deep learning.

This was before generative AI became a global narrative. Nvidia did not chase the AI boom; it built the infrastructure that made it possible.

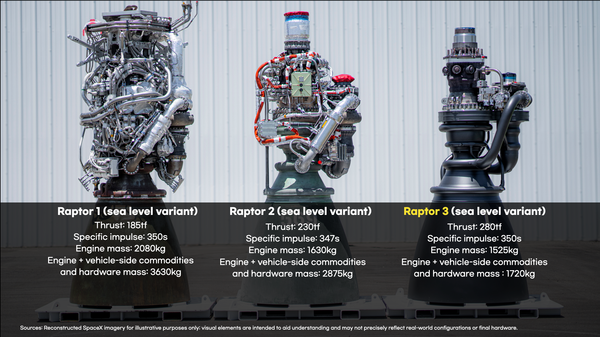

At the time, Baidu’s chief scientist Andrew Ng compared AI systems to rockets: performance scaled with size. More compute meant greater capability.

The comparison was literal.

In 2008, IBM’s Roadrunner became the first system to break the 1 petaflop barrier. It consumed 2.345 megawatts of power—comparable to thousands of homes—and required 296 racks filling a warehouse-scale facility.

Seventeen years later, Nvidia compressed petaflop-class inference performance into a 1.2-kilogram desktop system.

DGX Spark does not compete with Roadrunner in scientific FP64 workloads. That would be the wrong comparison. Roadrunner was built for high-precision numerical simulation. DGX Spark is optimized for AI inference using ultra-low precision formats such as FP4.

The architectural shift is what matters.

Traditional supercomputers maximized numerical accuracy. Modern AI systems trade precision for throughput and efficiency. The result is radically higher performance per watt for inference workloads. Intelligence is no longer gated by warehouse-scale infrastructure.

The Real Breakthrough: Integration

Raw FLOPS are not the core story. Integration is.

Conventional systems separate CPU and GPU memory pools. Data must move through PCIe, introducing bandwidth and latency constraints. DGX Spark adopts a unified memory architecture, allowing CPU and GPU to operate within a shared 128GB memory space.

NVLink-C2C extends bandwidth beyond traditional PCIe-based designs, reducing data movement overhead and improving efficiency for large model inference.

This architectural integration enables substantial models to run without full hyperscale infrastructure. The barrier to experimentation falls. Development shifts closer to the edge.

The scale of intelligence is no longer tied exclusively to the scale of buildings.

Why Starbase Matters

Huang’s choice of location was not accidental.

Starbase is not simply a rocket factory. It is an industrial campus built around extreme energy density, advanced thermal management, vertical integration, and high-throughput manufacturing. It represents a different frontier of physical scaling.

By referencing DGX-1 while delivering DGX Spark, Huang connected two arcs:

- The compression of computational density.

- The expansion of industrial and transport capacity.

Nvidia supplies infrastructure across the AI ecosystem—OpenAI, xAI, Anthropic, Google, Meta. Musk’s strained relationship with OpenAI’s current leadership is well known. Huang’s visit underscored Nvidia’s position not as a factional player, but as the foundational layer beneath them all.

Constrained by Physics

The deeper alignment between AI and space launch is structural.

Both industries are now constrained by physics.

AI data centers are limited by:

- Power density

- Cooling capacity

- Grid constraints

- Physical footprint

Launch systems are constrained by:

- Energy density

- Thermal loads

- Structural scaling

- Manufacturing throughput

The bottleneck is no longer theoretical. It is physical.

DGX Spark demonstrates how computational density can collapse inward. Starship represents the opposite movement: industrial capacity scaling outward.

The meeting at Starbase made that symmetry visible.

It was not merely the delivery of a machine. It was a visual statement that the next phase of AI will not be determined only by algorithms, but by energy systems, cooling architectures, and transport capacity.

Compute is becoming an industrial problem.

And industry is governed by physics.